Physics vs. Centralization: Why the Hyperscaler Model Fails Real-Time AI

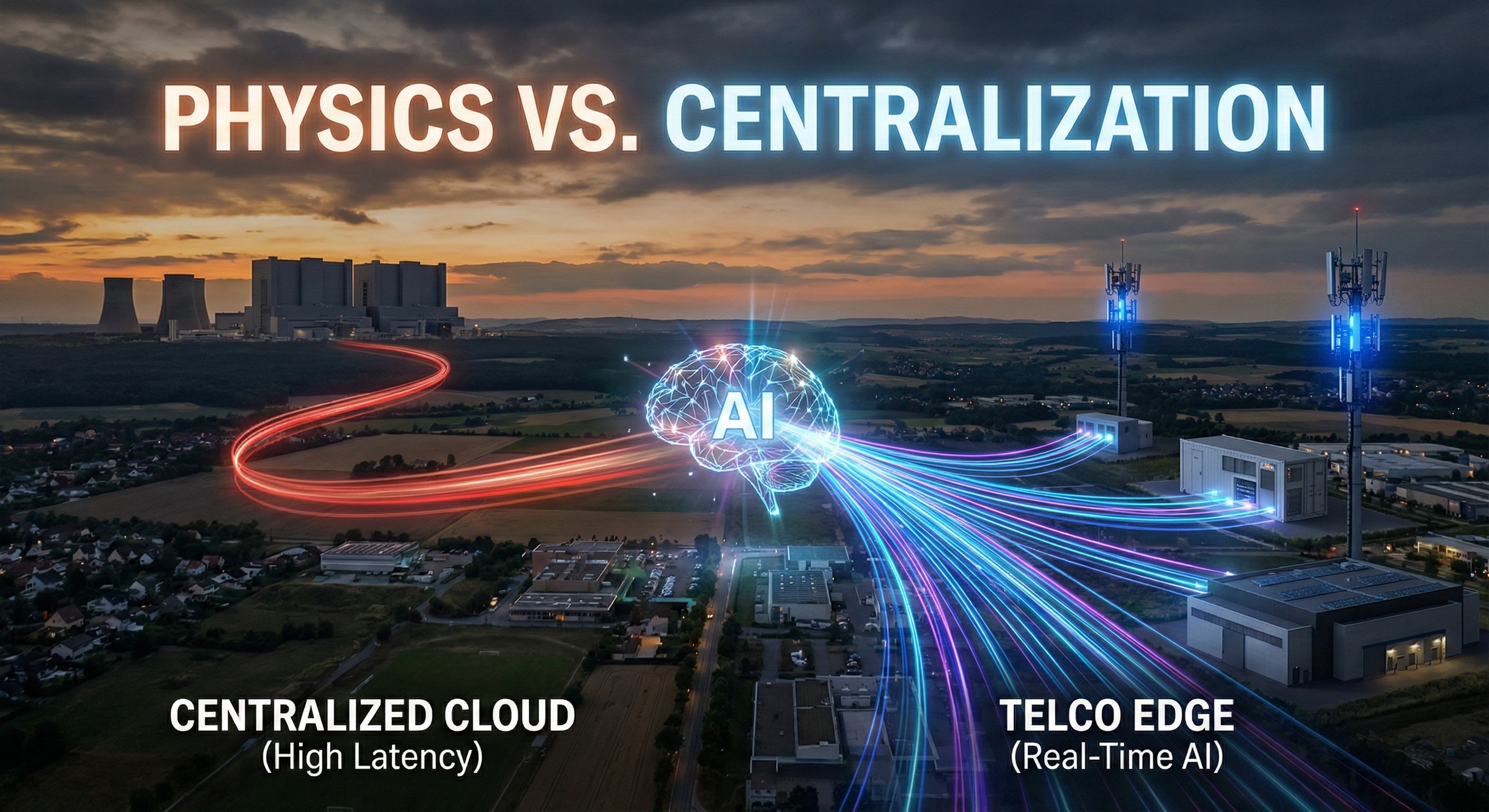

Centralized clouds cannot beat the speed of light. As AI moves from training to real-time inference, discover why sub-20ms latency and data sovereignty form the physical and legal moat telcos must use to win the enterprise edge.

Swarmio Team

Technical Analyst

Executive Summary:

The era of centralizing all workloads in massive hyperscaler data centers is fracturing under the physical constraints of latency and the legal constraints of data sovereignty.

Real-time AI, gaming, fintech, and automation require sub-20ms response times that centralized clouds cannot reliably deliver outside core regions.

Physics and regulation are pushing compute to the edge.

By deploying a monetization layer like Swarmio Core, telcos can activate their dormant edge infrastructure to deliver Sovereign AI and ultra-low latency inference, creating a high-margin revenue stream that hyperscalers cannot easily replicate.

Introduction: The End of the Cloud Monoculture

For the past fifteen years, the technology industry has operated under a powerful, albeit flawed, assumption: that the centralization of computing power in massive, hyperscale cloud facilities is the ultimate endpoint of digital architecture. The "Cloud Monoculture," dominated by a handful of global technology giants, has dictated how enterprises build, deploy, and scale their applications. This model was built on the premise that economies of scale would always trump the need for geographic proximity.

Through the early phases of the Artificial Intelligence boom, this centralized model appeared validated. Training foundational Large Language Models (LLMs) requires immense power budgets and tens of thousands of clustered GPUs operating in tandem. This brute-force, batch-processing phase of AI is uniquely suited to the hyperscaler environment.

However, as we move through 2026, the AI lifecycle is shifting from the laboratory to the physical world. We are moving from training models to running them in production, a phase known as AI inference. And in the realm of real-time AI inference, the centralized hyperscaler model is colliding with an immovable adversary: the speed of light.

For telecommunications executives, this collision represents the most significant commercial opportunity in a generation. The structural limitations of centralized public clouds are creating a massive, unfulfilled demand for localized compute. Telcos hold the keys to the distributed infrastructure required to solve this crisis, but capturing the value requires a fundamental shift from providing basic connectivity to orchestrating a revenue-ready edge cloud.

The 20-Millisecond Barrier: Why Centralized AI Fails the Real-Time Test

To understand why the hyperscaler model fails real-time AI, we must look at the hard physical limits of data transmission.

When an enterprise relies on a centralized cloud, user requests often travel hundreds or thousands of miles over a Wide Area Network (WAN) to reach a data center, be processed, and return. In the context of traditional web browsing or batch data analytics, a round-trip latency of 100 to 300 milliseconds is perfectly acceptable. But modern digital economies are no longer built solely on asynchronous web traffic.

Today's most critical workloads are fiercely latency-sensitive. Real-time AI, gaming, fintech, and automation require sub-20ms response times that centralized clouds cannot reliably deliver outside core regions.

Consider the implications across modern enterprise use cases:

Autonomous Systems and Robotics: In industrial manufacturing, an AI-driven quality control system or an automated robotic arm must detect anomalies and execute safety triggers in milliseconds. A 100-millisecond delay caused by a round-trip to a distant cloud could result in catastrophic equipment failure or human injury.

Conversational AI and Voice Agents: As voice-based AI agents become the standard for customer service and enterprise workflows, latency becomes the primary bottleneck for user experience. Human conversation naturally operates with near-instantaneous reaction times. If an AI agent takes more than a few hundred milliseconds to process inference in a distant cloud, the interaction feels jarring, robotic, and untrustworthy, leading to severe drop-offs in commercial funnels.

Financial Services: Fraud detection algorithms must authorize or block transactions at the point of sale in the blink of an eye.

Gaming: Swarmio Gaming is a revenue-generating, latency-critical vertical built on Swarmio Core. In competitive multiplayer gaming, a difference of 20 milliseconds can be the difference between winning and losing, which makes distant, centralized hosting a poor fit for elite digital experiences.

Latency is a physical limit. You cannot code your way out of the speed of light, and you cannot build a fiber-optic cable that defies the laws of physics. The only solution is to physically move the processing power closer to where the data is generated and consumed. Physics and regulation are pushing compute to the edge.
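To put rough numbers on that limit: light in optical fiber travels at about two thirds of its vacuum speed, roughly 200,000 km/s, so every 100 km of fiber path adds about 1 ms of round-trip time before any routing, queuing, or inference time is counted. The sketch below works through that arithmetic. The distances and the split of the 20 ms budget are illustrative assumptions, not measurements from any network.

```python
# Back-of-the-envelope propagation delay over optical fiber.
# Assumption: signal speed in fiber is ~200,000 km/s (about 2/3 of c).
# Real paths are longer than straight-line distance and add switching delay.

FIBER_SPEED_KM_PER_S = 200_000.0


def round_trip_ms(fiber_path_km: float) -> float:
    """Round-trip propagation delay in milliseconds for a fiber path length."""
    one_way_s = fiber_path_km / FIBER_SPEED_KM_PER_S
    return 2 * one_way_s * 1000.0


def max_fiber_path_km(budget_ms: float, processing_ms: float) -> float:
    """Longest one-way fiber path that fits the budget after processing time."""
    remaining_ms = max(budget_ms - processing_ms, 0.0)
    return (remaining_ms / 1000.0) * FIBER_SPEED_KM_PER_S / 2


if __name__ == "__main__":
    for km in (50, 500, 2000, 5000):  # illustrative distances
        print(f"{km:>5} km path: {round_trip_ms(km):5.1f} ms round trip")
    # Hypothetical split: 20 ms total, 10 ms reserved for inference and hops.
    print(f"Max one-way path: {max_fiber_path_km(20.0, 10.0):.0f} km")
```

Under these assumptions, a 2,000 km fiber path consumes the entire 20 ms budget on propagation alone, and reserving 10 ms for processing leaves room for roughly 1,000 km of fiber in each direction.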

The Sovereignty Mandate: When Centralization Becomes a Liability

While physics dictates the necessity of edge computing, global regulatory shifts are increasingly making it a legal requirement. In 2026, "data sovereignty" has moved from a niche policy discussion to a hard compliance requirement in a growing number of jurisdictions and regulated sectors.

Governments, healthcare organizations, and financial institutions are acutely aware of the risks associated with routing their most sensitive, mission-critical data through foreign-owned, centralized cloud infrastructure. The geopolitical climate has accelerated the demand for digital autonomy. Nations are aggressively pushing legislation that requires data to be processed and stored within their own borders, free from the jurisdictional reach of foreign authorities.

Data sovereignty is non-negotiable. Regulations increasingly mandate local processing of sensitive data, making centralized and foreign cloud models impractical or non-compliant.

This regulatory environment creates a massive vulnerability for the hyperscaler business model. A hyperscaler cannot simply build a massive, 100-megawatt data center in every single jurisdiction, province, or mid-sized city to satisfy local data residency laws. The economics of their centralized model prohibit it.

Conversely, this creates a profound structural advantage for domestic telecommunications operators. Telcos already possess the distributed real estate, the regional data centers, the Multi-access Edge Computing (MEC) nodes, and the localized fiber networks required to keep data strictly within sovereign borders. They have the trusted brands and the regulatory approvals already in place.
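In software terms, a residency rule of this kind behaves like a hard filter that runs before any placement decision is made. The sketch below is illustrative only: the `EdgeNode` fields, node names, and country codes are hypothetical and do not describe any real operator's inventory or any Swarmio API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EdgeNode:
    name: str                # hypothetical node identifier
    country: str             # ISO 3166-1 alpha-2 code of the physical location
    operator_domestic: bool  # True if the operator is domiciled in that country


def residency_compliant(nodes: list[EdgeNode], required_country: str,
                        require_domestic_operator: bool = True) -> list[EdgeNode]:
    """Keep only nodes located in the required country and, optionally,
    run by a domestic operator. Everything else is excluded outright."""
    return [
        n for n in nodes
        if n.country == required_country
        and (n.operator_domestic or not require_domestic_operator)
    ]


# Made-up inventory for illustration.
INVENTORY = [
    EdgeNode("telco-mec-toronto", "CA", True),
    EdgeNode("foreign-cloud-montreal", "CA", False),
    EdgeNode("foreign-cloud-virginia", "US", False),
]

if __name__ == "__main__":
    for node in residency_compliant(INVENTORY, "CA"):
        print(node.name)
```

Treating residency as a filter rather than a weighted preference means a non-compliant node can never win on price or latency, which is the property a strict data-residency rule calls for.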

The Telco Opportunity: Sovereign AI Workloads at the Edge

The convergence of sub-20ms latency requirements and strict data sovereignty laws creates a perfect storm of demand. AI is becoming a real-time, data-resident workload at the edge.

This is where the telecom industry must aggressively pivot. Rather than attempting to compete with hyperscalers on cheap, centralized storage or batch computing, telcos must corner the market on premium, localized AI inference.

Through platforms like Swarmio, operators can capture this specific, high-value demand. Swarmio AI turns telco edge infrastructure into sovereign, low-latency AI services for enterprises and governments. This involves transitioning from a connectivity provider to a managed service provider offering discrete, highly profitable AI products:

AI Inference as a Service: Fully managed AI inference on telco-edge GPUs. This allows enterprise developers to deploy their trained models directly into the telco's local edge environment, guaranteeing millisecond response times without the overhead of managing the underlying hardware.

Sovereign & Compliant by Design: Data-resident AI, aligned with sovereignty and regulation. By leveraging the telco's domestic footprint, enterprises can process highly sensitive healthcare, financial, or government data without it ever crossing a national border or touching a public internet exchange.

Usage-Based AI Monetization: Usage-based AI revenue, metered directly by Swarmio Core. This allows telcos to move away from rigid, flat-rate connectivity contracts and capture the exponential upside of AI consumption, billing enterprises for the precise amount of inference compute they utilize.
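As a rough illustration of what usage-based billing involves, the sketch below aggregates metered inference usage per tenant and prices it at a flat rate. The record fields, tenant names, and the per-GPU-second rate are made-up assumptions for illustration; they are not Swarmio Core's metering schema or pricing.

```python
from collections import defaultdict
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class UsageRecord:
    tenant: str           # hypothetical enterprise customer id
    gpu_seconds: Decimal  # metered GPU time consumed by one inference request


def invoice_totals(records, rate_per_gpu_second: Decimal) -> dict:
    """Sum GPU-seconds per tenant, multiply by a flat rate, round to cents."""
    usage = defaultdict(Decimal)  # missing tenants start at Decimal("0")
    for r in records:
        usage[r.tenant] += r.gpu_seconds
    return {
        tenant: (secs * rate_per_gpu_second).quantize(Decimal("0.01"))
        for tenant, secs in usage.items()
    }


if __name__ == "__main__":
    records = [
        UsageRecord("acme", Decimal("1.5")),
        UsageRecord("acme", Decimal("2.5")),
        UsageRecord("globex", Decimal("0.25")),
    ]
    # Hypothetical price: 0.05 currency units per GPU-second.
    print(invoice_totals(records, Decimal("0.05")))
```

`Decimal` is used instead of floats so that currency amounts round predictably. A production meter would also need deduplication, time windows, and tiered pricing, all of which are omitted here.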

The Execution Gap: Why Infrastructure Alone Isn't Enough

If the market demands localized AI, and telcos own the localized infrastructure, why hasn't the telecom industry already dominated this space?

The answer lies in the execution gap between raw hardware and developer experience. Having empty racks, power, and a 5G antenna at an edge location does not make an operator a cloud provider. Enterprise developers are accustomed to the frictionless experience of public clouds. They expect unified APIs, automated provisioning, elastic scalability, and dynamic orchestration.

Historically, telco infrastructure has been heavily siloed. Managing workloads across a fragmented footprint of regional data centers, scattered MEC nodes, and bare metal servers has been an operational nightmare, requiring heavy integration work and long, manual sales cycles. Demand is already ahead of infrastructure. Developers are building latency-sensitive applications today, but centralized deployment models cannot keep pace with where users and data reside.

To bridge this gap, telcos cannot rely on their legacy operational support systems (OSS) or business support systems (BSS). They require a specialized, software-defined monetization layer that abstracts the complexity of the edge and presents it as a unified, cloud-like product.

The Secret Weapon: Patented Predictive Orchestration

The true competitive advantage in the edge computing race is not just the physical location of the servers, but the intelligence used to route workloads between them. As AI inference scales across thousands of distributed telco nodes, manually determining where a specific computation should execute becomes impossible.

This requires advanced orchestration capabilities that can dynamically assess network health, server capacity, and latency requirements in real time. This is the core technological differentiator of the Swarmio platform.

Swarmio relies on Patented Predictive Orchestration. This technology features AI-driven workload placement that anticipates latency and capacity conditions across distributed edge infrastructure. This critical intellectual property is protected by an issued U.S. patent: US 11,063,881 B1.

By utilizing predictive orchestration, the telco edge transforms from a collection of static servers into a living, intelligent fabric. If a sudden spike in gaming traffic occurs in a specific metropolitan area, Swarmio Core can anticipate the capacity strain and route non-critical workloads to a neighboring regional data center, so that the ultra-low latency requirements of the gamers are maintained. This zero-touch automation is what allows telcos to offer enterprise-grade Service Level Agreements (SLAs) at the edge, matching the reliability of hyperscalers while vastly outperforming them on speed and sovereignty.
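The patented method itself is not described in this article, so the following is only a generic sketch of forecast-aware placement, not Swarmio's algorithm. It assumes each node reports a measured latency, its current utilization, and a recent utilization trend. The naive linear forecast, the utilization thresholds, and all names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class NodeState:
    name: str
    latency_ms: float     # measured round-trip latency to the user population
    utilization: float    # current load, 0.0 to 1.0
    trend_per_min: float  # recent change in utilization per minute


def forecast_utilization(node: NodeState, horizon_min: float) -> float:
    """Naive linear extrapolation clamped to [0, 1]; a stand-in predictor."""
    projected = node.utilization + node.trend_per_min * horizon_min
    return min(max(projected, 0.0), 1.0)


def place(nodes, latency_budget_ms: float, horizon_min: float = 5.0,
          max_forecast_util: float = 0.8) -> Optional[NodeState]:
    """Pick the lowest-latency node that meets the latency budget and is not
    forecast to exceed the utilization threshold within the horizon."""
    candidates = [
        n for n in nodes
        if n.latency_ms <= latency_budget_ms
        and forecast_utilization(n, horizon_min) <= max_forecast_util
    ]
    return min(candidates, key=lambda n: n.latency_ms, default=None)


# Made-up snapshot: the metro node is trending toward saturation.
NODES = [
    NodeState("metro-a", latency_ms=6.0, utilization=0.6, trend_per_min=0.06),
    NodeState("regional-b", latency_ms=14.0, utilization=0.4, trend_per_min=0.0),
]

if __name__ == "__main__":
    # Latency-critical workload: tight budget, allowed onto a busier node.
    print(place(NODES, latency_budget_ms=10.0, max_forecast_util=0.95))
    # Non-critical workload: loose budget, kept off the node forecast to fill.
    print(place(NODES, latency_budget_ms=50.0))
```

In this snapshot the latency-critical workload stays on the metro node while the non-critical workload is steered to the regional node before the metro node saturates, which mirrors the gaming scenario described above.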

Reclaiming the Edge: A Strategic Imperative for 2026

The era of hyperscaler dominance was built on the centralization of data. The next era of digital innovation, driven by real-time AI inference, immersive gaming, and autonomous systems, will be built on its decentralization.

For telecom executives, the strategic mandate is clear. The industry must stop treating its distributed infrastructure as a mere conduit for someone else's cloud services. The physical and regulatory constraints of 2026 have handed telcos a structural advantage in low-latency, sovereign compute that hyperscalers cannot easily replicate.

However, this advantage is useless without the right software to productize it. By adopting a comprehensive monetization layer equipped with unified billing, zero-touch provisioning, and patented predictive orchestration, telcos can rapidly convert their bare metal and MEC locations into revenue-ready, high-margin edge cloud products.

The centralized cloud was never meant to do everything. It is time for telcos to reclaim the edge, bringing AI out of the distant data center and into the real world where it belongs.

References & Further Reading:

Swarmio Inc. Proprietary Technology: Patented Predictive Orchestration (US 11,063,881 B1)

Akkodis (2026): Cloud Was Never Meant to Do Everything

Innovation, Science and Economic Development Canada (2026): Enabling large-scale sovereign AI data centres

JLL & CBRE Market Reports (2025/2026): AI Edge Data Centers: Powering Real-Time AI